Impact of GDPR on AI in the Healthcare Industry

The healthcare industry has been deeply impacted by GDPR and AI, with a focus on balancing privacy and progress. It's impressive to see how GDPR has the potential to affect patient data privacy, industry innovations, and personalized healthcare solutions.

Staff

The integration of Artificial Intelligence (AI) into the healthcare sector holds immense promise for transforming patient care, diagnostics, and operational efficiency. However, this transformative potential is profoundly shaped and, at times, constrained by the stringent requirements of the General Data Protection Regulation (GDPR). As health data is categorized as 'sensitive personal data,' its processing by AI systems is subject to elevated scrutiny, demanding robust legal bases, meticulous adherence to core data protection principles, and advanced technical safeguards. This report delves into the intricate relationship between GDPR and healthcare AI, highlighting critical challenges related to data collection, explainability, interoperability, and cross-border transfers. It explores how privacy-preserving technologies are enabling compliant innovation and examines the evolving regulatory landscape, including the interplay with the EU AI Act and real-world enforcement trends. Ultimately, successful AI adoption in healthcare hinges on a proactive, privacy-by-design approach that prioritizes patient trust, ethical considerations, and continuous regulatory compliance.

1. Introduction: The Converging Worlds of Healthcare AI and GDPR

The healthcare industry stands on the precipice of a technological revolution, largely driven by the advancements in Artificial Intelligence. From predictive analytics for disease outbreaks to personalized treatment regimens, AI offers unprecedented opportunities to enhance patient outcomes and streamline medical operations. Simultaneously, the General Data Protection Regulation (GDPR) has established a formidable legal framework governing the processing of personal data within the European Union (EU), with significant extraterritorial reach. The intersection of these two powerful forces—innovative AI and stringent data protection—is particularly complex in healthcare, where the data involved is inherently sensitive and intimately tied to an individual's fundamental rights.

1.1 Defining "Sensitive Health Data" under GDPR: A Foundation for Compliance

The General Data Protection Regulation defines 'personal data' broadly as any information relating to an identified or identifiable natural person, encompassing identifiers such as names, identification numbers, or location data. Within this broad scope, GDPR designates certain categories of personal data as 'sensitive' or 'special category data,' subjecting them to significantly stricter processing conditions. This special category includes genetic data, biometric data used for unique identification, and, most critically for the healthcare sector, 'health-related data'.

'Data concerning health' is explicitly defined as personal data related to an individual's physical or mental health, including information derived from the provision of healthcare services, which reveals details about their health status. This encompasses a wide array of information, from a patient's medical history, diagnoses, and treatments to medical opinions, results of health tests, and even data collected from fitness trackers.

The processing of such sensitive data is, in principle, prohibited unless a specific exemption outlined in Article 9 of the GDPR applies. This prohibition is rooted in the understanding that processing these types of data can involve "severe and unacceptable risks to fundamental human rights and freedoms". Unauthorized disclosure of health data, which touches upon a person's most intimate sphere, carries the potential for various forms of discrimination and violations of fundamental rights.

The classification of health data as a 'special category' immediately elevates its regulatory burden. This designation means that any AI system handling such information inherently carries a higher risk profile compared to AI systems processing less sensitive personal data. The potential for harm from misuse or breach of health data is significantly greater, extending beyond mere privacy violations to encompass potential discrimination, social stigmatization, and even physical harm through misdiagnosis or inappropriate treatment. Therefore, the foundational sensitivity of the data dictates a much higher bar for AI development and deployment from the outset, demanding more robust safeguards and meticulous compliance.

A crucial nuance in GDPR compliance for healthcare AI is the principle that the actual risk of data processing often depends less on the inherent content of the data and more on the context in which it is used. While health data is always sensitive, its practical risk level is dynamically influenced by how it is processed, by whom, for what purpose, and with what safeguards. For instance, anonymized health data used for purely statistical research might pose lower immediate risks than identifiable health data used for automated diagnostic decisions that directly impact individuals. This implies that compliance strategies for healthcare AI must be dynamic, adaptive, and context-aware, rather than a static, one-size-fits-all checklist. It necessitates a continuous assessment of risk, pushing organizations to conduct thorough Data Protection Impact Assessments (DPIAs) for each specific AI application and its evolving use cases. When processing sensitive data, organizations must prioritize exploring less intrusive alternatives, ensuring the lawfulness of processing, identifying applicable exemptions, and implementing additional safeguards such as pseudonymisation and encryption.

1.2 The Transformative Promise of AI in Healthcare

Artificial Intelligence is rapidly reshaping the healthcare landscape, offering profound opportunities for advancement and addressing long-standing challenges. AI systems are poised to deliver smart diagnostics, personalize treatment plans, and significantly improve overall patient outcomes. Specific applications span a wide spectrum, from powering advanced diagnostic tools and developing predictive models for disease progression or patient admissions to streamlining administrative tasks, thereby reducing the burden on healthcare professionals.

Beyond clinical applications, AI can accelerate drug discovery and optimize clinical trial designs by efficiently analyzing vast biomedical datasets, leading to faster development cycles and reduced costs. It enhances diagnostic accuracy, enabling earlier detection of critical conditions like sepsis or rare diseases, which can be life-saving. The ability of AI algorithms to rapidly process immense volumes of medical data aids in early disease detection and significantly reduces diagnostic errors. These advancements contribute to more efficient resource allocation within healthcare systems and improved access to quality care, particularly in underserved areas.

However, the transformative potential of AI in healthcare is intrinsically linked to its capacity to process large quantities of data. This creates a fundamental tension with GDPR, which, through its stringent principles of data minimization and purpose limitation, inherently restricts this "data-hungry" characteristic of AI. The very mechanism that makes AI powerful—access to extensive, diverse datasets—is precisely what GDPR seeks to control most stringently, especially for sensitive health data. This tension is not merely a compliance hurdle; it represents a strategic challenge for healthcare innovators, compelling them to devise novel, privacy-preserving approaches to data utilization that balance AI's utility with regulatory requirements.

1.3 Overview of GDPR's Foundational Principles for AI

The GDPR’s foundational principles are designed to ensure that personal data is processed lawfully, fairly, and transparently, and that data subjects’ rights are protected. When applied to AI systems, these principles become especially critical, governing every stage of AI development and deployment.

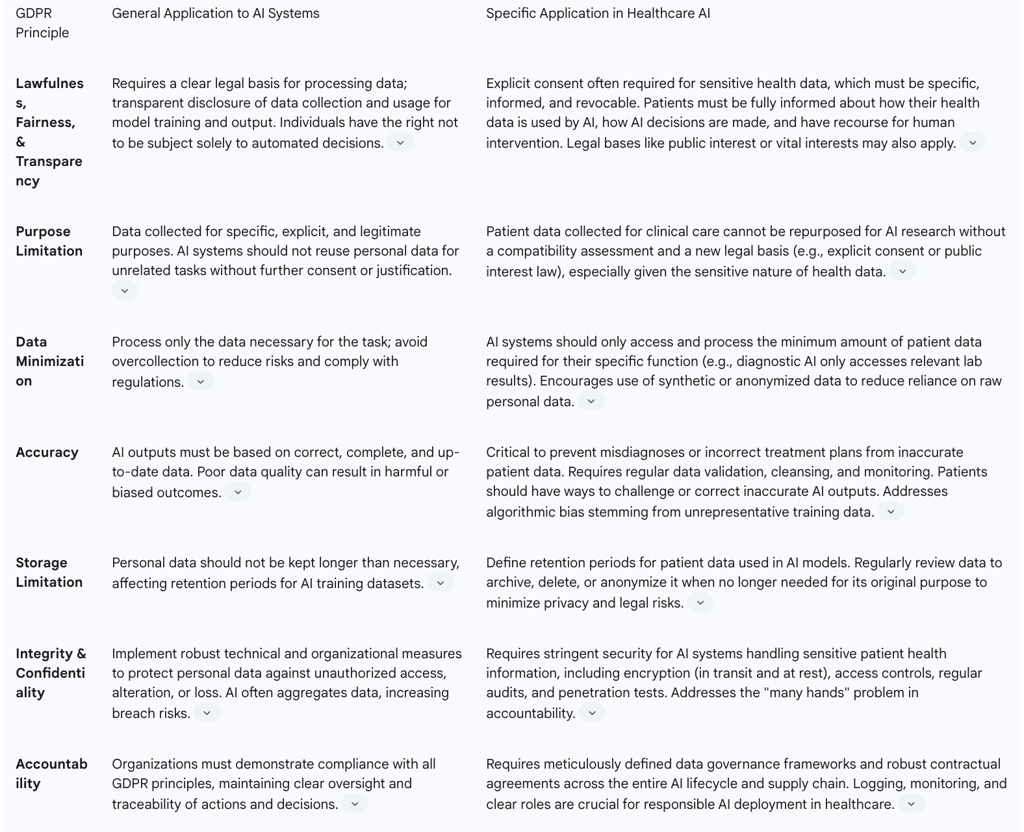

The core GDPR principles that directly impact AI operations include:

Lawfulness, Fairness, and Transparency: AI systems must operate with a clear legal basis for processing personal data, and organizations must openly disclose how data is collected and used.

Purpose Limitation: Data must be collected for specific, explicit, and legitimate purposes, preventing its reuse for unrelated tasks without further consent or justification.

Data Minimization: Only the data strictly necessary for the intended purpose should be processed, avoiding overcollection to reduce risks.

Accuracy: AI outputs must be based on correct, complete, and up-to-date data to prevent harmful or biased outcomes.

Storage Limitation: Personal data should not be retained longer than necessary, requiring defined retention periods for AI training datasets.

Integrity and Confidentiality: Robust technical and organizational measures must be implemented to protect personal data against unauthorized access, alteration, or loss.

Accountability: Organizations are responsible for demonstrating compliance with all GDPR principles, maintaining clear oversight and traceability of actions and decisions.

These principles apply comprehensively across the entire lifecycle of AI, from the initial collection of data for model training to the generation of model output and inferences. This holistic application underscores GDPR's role as more than just a legal compliance framework; it serves as a foundational ethical framework for AI development and deployment. The principles of lawfulness, fairness, transparency, accuracy, and accountability inherently guide AI development towards more ethical, responsible, and trustworthy systems. For instance, the accuracy principle directly addresses concerns about algorithmic bias and the potential for harmful outcomes, while transparency supports the ethical imperative of explainability, fostering trust in AI systems. Adhering to GDPR principles thus inherently pushes AI development towards more ethical, responsible, and trustworthy systems, particularly in sensitive sectors like healthcare where the impact on individuals is profound.

2. Core GDPR Principles: Direct Impact on Healthcare AI Operations

The foundational principles of GDPR are not merely theoretical constructs; they translate into concrete operational requirements that profoundly influence how AI systems can be developed, deployed, and managed within the healthcare industry. The sensitive nature of health data amplifies the importance of each principle, demanding meticulous attention to detail and proactive compliance strategies.

2.1 Lawfulness, Fairness, and Transparency: Establishing Legal Bases and Informing Data Subjects

For AI systems operating in healthcare, establishing a clear and valid legal basis for processing personal data is a non-negotiable prerequisite. Given that health data falls under the 'special categories' of personal data, explicit consent from the data subject is frequently the primary and most robust legal basis required. This consent must be "freely given, specific, informed and unambiguous," and individuals must be clearly informed of their right to withdraw consent at any time, with mechanisms to facilitate such withdrawal.

Beyond explicit consent, other legal bases for processing sensitive health data exist under specific conditions. These include situations where processing is necessary for employment, social security, and social protection law, to protect the vital interests of a data subject (especially in emergencies), for reasons of substantial public interest (particularly in public health contexts like preventing communicable diseases), or when processing is carried out by health professionals subject to professional secrecy for the provision of health or social care.

The principle of transparency mandates that organizations clearly and comprehensively disclose how they collect and use data, including its application for AI model training and output. Patients must be fully informed about the specific ways their data is utilized and how AI-driven decisions are made, with accessible recourse mechanisms for human intervention if an automated decision significantly affects them. This also entails providing meaningful information about the logic involved in any automated decision-making process.

A significant operational challenge for AI development in healthcare stems from the requirement for explicit and informed consent, coupled with the individual's right to withdraw that consent at any time. AI models, particularly those that learn and adapt, thrive on large, stable datasets for training and continuous improvement. If data subjects can withdraw their consent, requiring the removal of their data from training sets, it could necessitate costly and complex retraining of models. This administrative and technical burden can lead to a phenomenon often termed "consent fatigue" among data controllers, potentially disincentivizing the use of real patient data or pushing developers towards less effective, non-personal data sources, thereby hindering the very innovation AI promises in healthcare.

Furthermore, while GDPR's emphasis on transparency is intended to build trust between data subjects and data controllers, it collides with the "black box" nature of many advanced AI models, especially deep learning systems, which are "not easily interpretable". The legal mandate to explain "how the decision was made" or provide "meaningful information about the logic involved" becomes a significant technical hurdle. This implies that transparency is not merely a legal checkbox but a fundamental engineering challenge. Overcoming this challenge through Explainable AI (XAI) techniques (as discussed in Section 3) is crucial for fostering patient and clinician trust, which is essential for the widespread adoption and effectiveness of AI in healthcare.

2.2 Purpose Limitation and Data Minimization: Balancing Innovation with Necessity

The principle of purpose limitation is a cornerstone of GDPR, dictating that personal data must be collected for "specific, explicit, and legitimate purposes" and should not be further processed in a manner incompatible with those initial purposes. This means that AI systems in healthcare cannot simply repurpose patient data collected for one clinical purpose (e.g., diagnosis) for an entirely different AI development task (e.g., drug discovery) without a new, valid legal basis or explicit consent.

Complementing this is the principle of data minimization, which requires organizations to process "only the data necessary for the task". While AI models often achieve optimal performance when trained on large datasets, organizations must carefully assess what data is truly essential to avoid overcollection and reduce associated privacy risks.

The tension between AI's "data hunger" and GDPR's "data lean" principles is a significant challenge. AI models, particularly those employing deep learning, typically achieve optimal performance when trained on vast and diverse datasets. However, GDPR's principles of data minimization and purpose limitation directly challenge this approach. This compels a re-evaluation of traditional AI development methodologies in healthcare. Instead of simply collecting as much data as possible, organizations are forced to strategically assess data necessity and explore innovative approaches like synthetic data generation or federated learning that allow AI to learn from data patterns without directly processing or centralizing large volumes of identifiable patient information. This paradox is not merely a compliance burden but a driving force for innovation in privacy-preserving AI techniques.

Reusing patient data, often initially collected for primary clinical care, for AI training in scientific research presents a particular legal minefield. While scientific research is generally considered a compatible secondary use of data under GDPR, provided appropriate safeguards are in place , the "nature of the data" being highly sensitive health information significantly "narrows the scope for compatibility". This means that healthcare organizations cannot simply assume that existing patient data can be automatically repurposed for AI research or development. Each new AI project or research endeavor requires a rigorous, documented compatibility assessment. If the secondary use is deemed incompatible with the initial purpose, data reuse is prohibited unless based on new explicit consent or a specific public interest law, such as those related to public health or scientific research under Article 9(2). This adds substantial legal and administrative overhead to AI research in healthcare, requiring careful planning and legal counsel.

2.3 Accuracy and Data Quality: Ensuring Reliable AI Outcomes

The accuracy principle under GDPR mandates that personal data used in AI systems must be "correct, complete, and up to date". This requirement is of paramount importance because poor data quality can directly lead to "harmful or biased outcomes". Organizations are legally obligated to ensure data accuracy and to correct any errors promptly. This necessitates implementing processes for regular data validation, cleansing, and continuous monitoring of data quality throughout the AI lifecycle.

In the healthcare context, this principle is elevated to a critical patient safety mandate. The consequences of inaccurate AI outputs are severe, potentially leading to "misdiagnoses or incorrect treatment plans" and direct harm to patients. This requires not only rigorous data validation and cleansing during the AI model's training phase but also continuous monitoring and re-validation of AI models in deployment to ensure their inferences remain accurate and reliable over time. Furthermore, patients should be provided with clear mechanisms to challenge or correct any inaccurate outputs generated by AI systems that pertain to them.

The snippets explicitly link "poor data quality" to "harmful or biased outcomes". Real-world examples, such as the COMPASS algorithm disproportionately flagging Black defendants in criminal justice or Optum's healthcare algorithm disadvantaging Black patients due to being trained on healthcare spending rather than needs, demonstrate how biased training data can lead to discriminatory results. This illustrates a critical connection: GDPR's accuracy principle is intrinsically tied to algorithmic fairness. If the underlying training data is unrepresentative or contains historical biases, the AI model will inevitably perpetuate and even amplify those biases, leading to unfair or discriminatory outcomes that violate GDPR's fairness principle and broader ethical guidelines. Therefore, ensuring accuracy requires not just technical data cleansing but also a deep understanding of data representativeness and the implementation of sophisticated bias detection and mitigation strategies throughout the AI system's design, development, and deployment.

2.4 Integrity, Confidentiality, and Accountability: Securing Sensitive Health Data

The principles of integrity and confidentiality demand that organizations implement robust technical and organizational measures to protect personal data against unauthorized access, alteration, or loss. This is particularly crucial for AI systems in healthcare, which frequently aggregate data from multiple sources, thereby increasing the risks of data breaches and unauthorized access. Key security measures include comprehensive encryption of data, both in transit and at rest , and the implementation of strict access controls to limit data exposure to authorized personnel only. These security measures must be proportionate to the sensitivity and volume of the data being processed. Regular audits, penetration tests, and continuous employee training are also essential to strengthen data protection, identify vulnerabilities, and ensure that security protocols are effective and up-to-date.

The principle of accountability places the responsibility on organizations to demonstrate compliance with all GDPR principles. This requires maintaining robust accountability mechanisms, such as detailed logging of data processing activities, continuous monitoring of AI system behavior, and clearly defined roles and responsibilities for data handling and oversight. These mechanisms enhance trust in AI systems, support regulatory compliance, and foster responsible AI deployment.

The "many hands" problem, where numerous stakeholders (AI developers, healthcare providers, data processors, cloud vendors) contribute to the development and deployment of AI systems, complicates the assignment of accountability when adverse outcomes occur. GDPR's accountability principle mandates that controllers must demonstrate compliance and define clear roles and responsibilities. When combined with the "many hands" problem, this means that healthcare AI projects require meticulously defined data governance frameworks and robust contractual agreements that explicitly assign data protection responsibilities across the entire AI lifecycle and supply chain. This extends beyond internal organizational structure to rigorous vendor management and third-party risk assessment.

Furthermore, while GDPR emphasizes "robust technical and organizational measures" and "stringent security for AI systems handling sensitive patient health information" , it is crucial that security is not an afterthought. Security must be integrated into every stage of AI development, deployment, and operation. This necessitates a comprehensive "security by design" approach, mirroring the "privacy by design" principle. It is a continuous process involving not just the initial implementation of encryption and access controls, but also regular audits, penetration tests, and ongoing monitoring to adapt to evolving threats. This represents a critical shift from reactive security measures to a proactive, lifecycle-integrated security posture for healthcare AI.

Table 1: GDPR Principles and Their Application to Healthcare AI

3. Automated Decision-Making and the Imperative of Explainability

The rise of AI in healthcare introduces complex questions regarding automated decision-making, particularly when these decisions significantly affect individuals' health outcomes. GDPR, through Article 22 and Recital 71, seeks to empower data subjects with rights related to understanding and challenging such automated processes, imposing a significant challenge on the often opaque nature of AI.

3.1 Article 22: The Right Against Solely Automated Decisions in Healthcare

Article 22 of the GDPR grants data subjects the fundamental right not to be subject to a decision based solely on automated processing, including profiling, if that decision produces legal effects concerning them or similarly significantly affects them. In healthcare, this provision is highly relevant for AI systems involved in critical functions such as automated diagnoses, treatment recommendations, or patient management, where an AI's decision could have profound impacts on an individual's life and well-being.

However, this right is not absolute. Paragraph 1 of Article 22 does not apply if the decision is: (a) necessary for entering into, or performance of, a contract between the data subject and a data controller; (b) authorized by Union or Member State law to which the controller is subject and which also lays down suitable measures to safeguard the data subject's rights and freedoms and legitimate interests; or (c) based on the data subject's explicit consent.

Even when these exceptions apply, the data controller is mandated to implement suitable safeguards to protect the data subject's rights and legitimate interests. These safeguards must, at a minimum, include the right to obtain human intervention on the part of the controller, the right to express one's point of view, and the right to challenge the decision. Furthermore, decisions based solely on special categories of personal data (such as health data) are generally prohibited under Article 9(1), unless explicit consent or a substantial public interest basis applies, and suitable measures to safeguard data subject rights are in place. This means that for AI in healthcare, relying on automated decisions concerning sensitive health data requires even more rigorous justification and robust protective measures.

The emphasis on transparency in GDPR, intended to build trust between data subjects and data controllers, directly confronts the "black box" nature of many advanced AI models. These models, particularly deep learning systems, are often "not easily interpretable". The legal mandate to explain "how the decision was made" or provide "meaningful information about the logic involved" becomes a significant technical hurdle. This implies that transparency is not merely a legal checkbox but a fundamental engineering challenge. Overcoming this challenge through Explainable AI (XAI) techniques is crucial for fostering patient and clinician trust, which is essential for the widespread adoption and effectiveness of AI in healthcare.

3.2 Recital 71 and the "Right to Explanation": Demystifying AI's Clinical Judgments

Recital 71 of the GDPR emphasizes the importance of transparency and the right of data subjects to understand the meaning and logic behind automated decisions that significantly affect them. This has often been interpreted as a "right to explanation" in the context of algorithms and AI. The general concept of a right to explanation refers to an individual's right to be given an explanation for an output of an algorithm, particularly for decisions that produce legal or similarly significant effects.

However, the extent to which the GDPR truly provides a comprehensive "right to explanation" is a subject of ongoing debate. Critics argue that this right is narrowly defined within the GDPR's binding articles, primarily stemming from Article 22, which focuses on decisions "solely" based on automated processing. This limitation means it may not cover many algorithmic controversies where human involvement, even if minimal, is present. Furthermore, Recitals, while offering interpretative guidance, are not legally binding, and the explicit "right to an explanation" was reportedly removed from the binding articles during the legislative process. Article 15, the "right of access," allows access to "meaningful information about the logic involved" in automated decision-making, but its practical application faces challenges.

Despite these debates, the spirit of explainability remains central to responsible AI development, especially in healthcare. The European Union's Artificial Intelligence Act (AI Act), a more recent legislative instrument, provides a clearer and more explicit "right to explanation of individual decision-making" in Article 86 for certain high-risk AI systems that produce significant, adverse effects on an individual's health, safety, or fundamental rights. This right mandates "clear and meaningful explanations of the role of the AI system in the decision-making procedure and the main elements of the decision taken".

The technical challenges of achieving explainability for "black box" AI models in healthcare remain substantial. These systems are often complex and difficult to interpret, making it challenging to provide clear, human-understandable justifications for their clinical judgments. Yet, fostering trust in AI systems within healthcare is paramount, and this trust hinges on the ability of patients and clinicians to comprehend and, if necessary, contest AI-driven recommendations. Therefore, the pursuit of explainable AI (XAI) techniques is not just a regulatory compliance measure but a fundamental requirement for ethical and effective AI adoption in the medical field.

3.3 Challenges of Explainable AI (XAI) in High-Stakes Healthcare Contexts

The implementation of Explainable AI (XAI) in healthcare, while crucial for GDPR compliance and patient trust, faces significant inherent challenges. Many advanced AI models, particularly deep learning systems, are often referred to as "black boxes" because their internal workings are complex and "not easily interpretable". This opacity makes it difficult to understand how they arrive at specific diagnoses, treatment recommendations, or risk predictions.

The challenges of XAI in high-stakes healthcare contexts include:

Balancing Performance with Transparency: There is often a trade-off between the accuracy and performance of complex AI models and their interpretability. Highly accurate "black box" models may be difficult to explain, while simpler, more explainable models might sacrifice some predictive power.

Algorithmic Bias: AI models learn from historical data, which may contain biases reflecting societal inequities or unrepresentative patient populations. If AI-driven systems are trained on biased datasets, they can inadvertently discriminate against certain patient groups, leading to disparities in care. Explaining decisions made by biased algorithms is complex and requires identifying and mitigating these underlying biases.

Re-identification Risks: While AI can help anonymize patient data, the process is not foolproof. Advanced de-anonymization techniques can potentially re-identify individuals from supposedly anonymous datasets, posing significant privacy risks. This risk is heightened when attempting to provide detailed explanations that might inadvertently reveal sensitive information.

Ethical Considerations: Beyond legal compliance, XAI in healthcare raises profound ethical questions. Ensuring that AI tools comply with ethical guidelines regarding patient consent and data usage is paramount. The misuse of generative AI tools, for instance, poses risks like falsification of medical records, misinformation, and privacy violations.

Tailoring Explanations for Diverse Stakeholders: Different users of healthcare AI systems require different levels and types of explanations. End-users (patients) need clear, intuitive explanations to build trust, while AI developers need detailed technical insights for debugging and refinement. Clinicians require explanations that integrate with their medical expertise, and regulators need audit trails and clear documentation to ensure compliance. Tailoring these explanations without overwhelming or misinforming the audience is a complex task.

Implementing XAI is crucial for detecting and addressing bias, ensuring accountability, and fostering trust in AI systems. Without adequate explainability, healthcare providers may be hesitant to adopt AI tools, and patients may lack confidence in AI-driven decisions, ultimately hampering the transformative potential of AI in medicine.

4. Navigating Data Challenges in Healthcare AI Development

The development of AI in healthcare is inherently data-intensive, yet it operates within a highly regulated environment where patient data is considered exceptionally sensitive. This creates significant challenges related to data collection, sharing, and cross-border transfers, demanding innovative solutions and meticulous compliance strategies.

4.1 Data Collection and Training Limitations: Consent, Volume, and Re-identification Risks

AI models, particularly those employing deep learning, require vast amounts of data for effective training and validation. However, GDPR mandates explicit, informed, and easily revocable consent for the processing of personal data, especially sensitive health data. This presents a significant hurdle, as obtaining and managing ongoing consent for large, evolving datasets can be administratively complex and costly. If patients withdraw consent, their data must be removed, potentially necessitating expensive retraining of AI models. This tension between AI's data hunger and GDPR's data minimization principle, which requires processing only the necessary data , forces developers to seek alternative data strategies.

A critical concern is the risk of re-identification, even when data is ostensibly anonymized. While GDPR allows for truly anonymized data to be used without restrictions, achieving absolute anonymization, particularly for complex health data like medical images or genetic information, is "difficult, if not impossible". Advanced de-anonymization techniques can potentially re-identify individuals from supposedly anonymous datasets, posing significant privacy risks. The European Data Protection Board (EDPB) adopts a narrow interpretation of anonymization, emphasizing that anonymity claims will face rigorous scrutiny. To be considered anonymous, the likelihood of re-identification must be "not reasonably likely," implying a very low, but not necessarily zero, risk under current technological and contextual conditions. This means organizations must continuously test for vulnerabilities and prove their models can withstand real-world attacks.

Furthermore, if an AI model is developed with unlawfully processed personal data, its subsequent deployment—and any downstream system built upon it—could also be deemed unlawful. This poses a major risk to the entire AI value chain, as a single non-compliant model can undermine entire ecosystems. Therefore, "Privacy-by-design" is essential to prevent legal and operational risks in AI development.

4.2 Data Interoperability and Sharing: Overcoming Silos under Strict Privacy Rules

The seamless flow of patient information across different systems is a major challenge in healthcare, profoundly impacting AI development. Despite the shift to Electronic Medical Records (EMRs), patient data often remains "locked within different platforms," creating data silos. This lack of interoperability stems from several factors, including inconsistent data formats (e.g., structured fields vs. free text), the use of proprietary systems that restrict data sharing, and variations in coding terminologies (e.g., ICD-10, SNOMED, LOINC).

These interoperability challenges directly impede AI training and the ability of healthcare providers to obtain a comprehensive view of a patient's history. AI models thrive on diverse and extensive datasets, and fragmented data limits their effectiveness and generalizability. While interoperability aims to improve data sharing, it must not compromise patient privacy. Healthcare data is highly sensitive, and strict regulations like GDPR (and HIPAA in the US) impose stringent conditions on how it is shared. Key concerns include ensuring shared data remains encrypted and securely transmitted, managing patient consent for data sharing across multiple platforms, and preventing unauthorized access to protected health information.

The GDPR's purpose limitation principle further complicates data sharing for AI research. Patient data, initially collected for primary clinical purposes, often needs to be repurposed for AI training. While scientific research is generally considered a compatible secondary use, the highly sensitive nature of health data significantly narrows the scope for such compatibility. This means that each new AI research endeavor requires a rigorous compatibility assessment and potentially a new legal basis, adding substantial legal and administrative overhead to data sharing initiatives. Overcoming these silos requires not only technical standardization but also robust data governance frameworks that balance data utility with privacy protection.

4.3 Cross-Border Data Transfers: Article 46 Safeguards for Global Healthcare AI

The global nature of AI development and healthcare research often necessitates the transfer of personal data across international borders. GDPR imposes stringent conditions on the transfer of personal data outside the European Economic Area (EEA), requiring that the destination country ensures an "essentially equivalent" level of data protection to that provided within the EU. This is particularly critical for healthcare AI, where sensitive patient data may be processed by AI models or stored on servers located in different jurisdictions.

To facilitate such transfers while maintaining compliance, GDPR provides several mechanisms under Article 46 ("Transfers subject to appropriate safeguards"). These include:

Adequacy Decisions: Where the European Commission recognizes that a third country offers a sufficient level of data protection.

Standard Contractual Clauses (SCCs): Pre-approved contractual clauses that impose GDPR-level data protection obligations on the data importer.

Binding Corporate Rules (BCRs): Internal rules adopted by multinational corporations for transfers within their group, approved by supervisory authorities.

Approved Codes of Conduct or Certification Mechanisms: Coupled with binding and enforceable commitments.

Following landmark rulings like Schrems II, organizations must conduct a Transfer Impact Assessment (TIA) when relying on SCCs or BCRs. This assessment involves evaluating the legal and practical landscape of the recipient country, including its laws on government surveillance and enforcement mechanisms, to determine if supplementary safeguards are needed to ensure an "essentially equivalent" level of protection.

For cloud-based and decentralized AI infrastructures, which are common in global healthcare AI development, mapping these data flows and ensuring compliance with cross-border transfer regulations adds significant complexity. Technical safeguards play a crucial role in mitigating residual risks identified in TIAs. Strong encryption of data at rest and in transit, along with pseudonymization and strict access controls, can significantly reduce the risks associated with international data transfers, even when SCCs or BCRs alone may not fully address them. Transparency with data subjects about where their data is transferred and who has access to it is also paramount for building trust and ensuring compliance.

5. Privacy-Preserving Technologies: Enabling Compliant AI Innovation

The tension between AI's data demands and GDPR's stringent privacy requirements has spurred significant innovation in privacy-preserving technologies (PPTs). These technologies offer crucial pathways for healthcare organizations to develop and deploy AI solutions while maintaining robust data protection and ensuring GDPR compliance.

5.1 Anonymization vs. Pseudonymization: Strategic Data Transformation

In the context of GDPR compliance for healthcare AI, understanding the distinction between anonymization and pseudonymization is fundamental for strategic data transformation.

Anonymization involves the permanent removal of all identifying information from data, making re-identification of individuals impossible. Truly anonymized data falls outside the scope of GDPR, meaning it can be processed without the strict requirements applicable to personal data. However, achieving absolute anonymization, especially for complex health data like medical images or genetic information, is "difficult, if not impossible". The European Data Protection Board (EDPB) emphasizes that anonymity claims will face rigorous scrutiny, requiring adequate evidence that personal data cannot be extracted with reasonable means and that the model's output does not pertain to the individuals whose data was used for training. A "risk-based anonymization" approach, which aims to reduce re-identification risk to an acceptable threshold, has emerged as a practical solution, supported by EU case law.

Pseudonymization is a more flexible approach where identifiable information is replaced with artificial identifiers or unique codes, while the possibility of re-identification is maintained through additional information kept separately and securely. Pseudonymized data is still considered personal data under GDPR, requiring continued compliance with privacy laws and data subject rights. However, it significantly minimizes privacy risks, making it particularly suitable for longitudinal studies and medical research where tracking patient data over time is necessary without direct identification. Technical measures like key-coding data or employing encryption with a secret key are common techniques.

Both methods, when implemented effectively, are recognized by GDPR as means to mitigate risks in processing personal data. The choice between them depends on the specific use case, the acceptable level of re-identification risk, and the need to preserve data utility.

5.2 Federated Learning: Collaborative AI Training without Centralized Data

Federated Learning (FL) is a distributed machine learning approach that directly addresses the challenge of data centralization and privacy in healthcare AI. In an FL setup, AI models are trained across decentralized data sources, meaning that raw patient data never leaves the local servers or devices of individual healthcare institutions. Instead of sharing sensitive raw data, only encrypted model updates or learned parameters are sent to a central system for aggregation.

This approach offers significant benefits for GDPR compliance in healthcare:

Enhanced Data Privacy and Security: By keeping patient data localized, FL minimizes the risk of unauthorized data access, data leaks, and legal issues associated with centralizing sensitive information.

Compliance with Privacy Laws: FL helps healthcare organizations adhere to privacy regulations like GDPR (and HIPAA in the US) by limiting centralized storage risks and ensuring that electronic protected health information (ePHI) remains within each facility's own security systems.

Improved Collaboration and Accelerated Research: FL breaks down data silos, enabling multiple hospitals and research centers to collaborate on AI model development using diverse datasets without compromising their individual data security. This improves AI model quality and accelerates research, particularly for rare diseases where data is scarce.

However, challenges remain for FL implementation. While it addresses many legal, security, and privacy concerns, some privacy problems can still exist, such as the potential for information to leak from the model updates themselves, or issues related to trust among collaborating partners. To mitigate these residual risks, FL is often complemented by other privacy-preserving tools such as differential privacy, secure multiparty computation, and additional encryption. Despite these challenges, federated learning represents a powerful solution for balancing AI innovation with strict data privacy requirements in healthcare.

5.3 Homomorphic Encryption: Computing on Encrypted Health Data

Homomorphic Encryption (HE) represents a groundbreaking advancement in privacy-preserving technologies, enabling computations to be performed directly on encrypted data without the need for decryption. This means that data remains encrypted throughout its lifecycle—at rest, in transit, and even during processing—eliminating a critical vulnerability point where traditional encryption methods fall short.

For healthcare AI, HE offers transformative potential for secure collaboration and data sharing:

Secure Medical Research: HE allows multiple hospitals and research institutions to collaborate on studies and analyze vast amounts of health data (e.g., patient records, medical images) to identify trends or optimize treatment plans, all while the underlying data remains encrypted. This facilitates the creation of more comprehensive datasets for AI and machine learning models, improving the accuracy and utility of predictions without exposing sensitive patient information.

Genomic Data Analysis: Given the extreme sensitivity of genetic information, HE provides a crucial mechanism for securely processing genomic data, which is vital for advancements in personalized medicine and understanding disease predispositions.

Confidential AI-as-a-Service: HE enables AI models to process encrypted data in cloud environments without compromising privacy, a significant advantage for healthcare organizations leveraging cloud computing for AI applications.

Secure Inference: AI models can make predictions or provide insights on encrypted data, ensuring that sensitive patient data remains private even when AI is providing diagnostic or treatment recommendations.

Homomorphic encryption significantly enhances GDPR compliance by ensuring that accessed data is unusable and cannot be tampered with or poisoned, even in the event of a breach. It enables secure collaboration and adherence to various privacy acts and international standards. However, HE is computationally intensive, can lead to slower performance, and requires specialized expertise for its implementation, posing practical challenges for widespread adoption. Despite these limitations, fully homomorphic encryption, which allows for an infinite number of operations on encrypted data, holds immense promise for the future of privacy-preserving AI in healthcare.

5.4 Differential Privacy and Synthetic Data: Enhancing Privacy Guarantees

Beyond anonymization and pseudonymization, Differential Privacy (DP) and Synthetic Data Generation are advanced privacy-preserving technologies that offer robust solutions for enabling GDPR-compliant AI in healthcare.

Differential Privacy (DP): Differential privacy is a mathematical framework that adds controlled, quantifiable noise to datasets or query results before they are used to train AI systems or for aggregate analysis. This "noise" makes it difficult to extract sensitive information about any single individual from the query results or the AI model, even if an attacker has auxiliary information.

Key benefits of DP in healthcare AI for GDPR compliance include:

Strong Privacy Guarantees: DP provides a quantifiable and provable privacy guarantee, making it highly resistant to re-identification attacks.

Enabling Aggregate Analysis: It allows for the analysis of patient records and drug efficacy assessment while ensuring patient confidentiality, even when training AI models on sensitive data like medical images.

Fostering Trust: Individuals may be more willing to provide accurate and detailed information when confident that their privacy is protected.

Compliance Support: DP directly helps organizations comply with data privacy regulations like GDPR.

However, DP has limitations, including potentially reduced data quality and accuracy, especially in small datasets, increased technical complexity, and computational overhead. Multiple queries on the same dataset can also weaken privacy guarantees.

Synthetic Data: Synthetic data refers to artificially generated datasets that mimic the statistical properties and relationships of real-world patient data without containing any sensitive or identifiable information from actual individuals.

Its role in achieving GDPR compliance for healthcare AI is significant:

Overcoming Data Scarcity and Privacy Concerns: Synthetic data addresses the challenge of acquiring sufficient volumes of high-quality, real-world data for AI training due to privacy regulations and data scarcity, particularly for rare diseases.

Facilitating Data Sharing and Collaboration: It enables secure data sharing and collaboration among researchers and institutions across jurisdictions without compromising patient confidentiality, as it contains no personal data.

Mitigating Re-identification Risks: When properly generated, synthetic data significantly reduces the potential for data misuse and patient re-identification compared to using authentic data.

Ethical Advantages: It offers ethical benefits by reducing consent-related issues and allowing privacy-preserving simulations that yield similar results to original data without direct access to personal health information.

Enhancing AI Quality: Synthetic data can be generated to be diverse and free from biases present in real-world data, leading to more accurate and inclusive AI models. Common generation methods include rule-based approaches, statistical modeling, Generative Adversarial Networks (GANs), and Synthetic Minority Over-sampling Technique (SMOTE).

Synthetic data generation acts as a privacy-preserving approach that allows healthcare AI to flourish within the strict confines of GDPR by providing a mechanism for data utility without compromising individual privacy.

5.5 Privacy by Design and Data Protection Impact Assessments (DPIAs): Proactive Compliance

Achieving GDPR compliance in healthcare AI necessitates a proactive and integrated approach, with Privacy by Design and Data Protection Impact Assessments (DPIAs) serving as foundational pillars.

Privacy by Design: This principle mandates that privacy and data protection measures are embedded into the design and architecture of AI systems from the very outset, rather than being added as an afterthought. For AI models to comply with GDPR, privacy must be "baked in from the start". This involves considering data protection requirements at every stage of the AI lifecycle, from data collection and model training to deployment and decommissioning. It ensures that data minimization, purpose limitation, and security measures are inherent to the system's functionality.

Data Protection Impact Assessments (DPIAs): DPIAs are mandatory under GDPR for processing operations that are "likely to result in a high risk to the rights and freedoms of natural persons". Given the sensitive nature of health data and the complexity of AI systems, DPIAs are almost always required for healthcare AI projects.

A DPIA is a systematic process designed to:

Identify and Assess Privacy Risks: It helps organizations identify potential privacy risks associated with a new AI technology or data processing operation.

Evaluate Necessity and Proportionality: It assesses whether the processing is necessary and proportionate to the intended purpose, and if less intrusive alternatives exist.

Implement Mitigation Measures: It helps determine and implement appropriate technical and organizational measures to mitigate identified risks.

Demonstrate Accountability: Conducting a DPIA is a key component of demonstrating accountability under GDPR, as it requires thorough documentation of the assessment process and the decisions made.

The European Data Protection Board (EDPB) emphasizes that DPIAs should include necessity assessments and decisions, advice from the Data Protection Officer, technical and organizational measures taken during AI model design to reduce identification risks, and documentation of the model's theoretical resistance to re-identification techniques. This proactive approach ensures that privacy considerations are not an afterthought but an integral part of AI development, safeguarding patient data and fostering trust.

6. Regulatory Oversight, Enforcement, and Real-World Implications

The regulatory landscape for AI in healthcare is rapidly evolving, marked by an increasing convergence of general data protection laws like GDPR with sector-specific and AI-specific legislation. This intricate web of regulations, coupled with growing enforcement actions, underscores the critical need for robust compliance frameworks in the industry.

6.1 Interplay with the EU AI Act: A Dual Regulatory Framework for Medical Devices

The European Union's Artificial Intelligence Act (EU AI Act), which entered into force on August 1, 2024, establishes a horizontal legal instrument designed to foster responsible AI development and deployment across the EU. This Act complements the GDPR, creating a dual regulatory framework, particularly for AI systems used in healthcare. The AI Act adopts a risk-based approach, classifying AI systems into categories: unacceptable risk (prohibited), high risk, limited risk, and minimal risk.

Crucially, most AI-powered medical devices (AIaMD) and in vitro diagnostic devices (IVDs) are classified as "high-risk AI systems" under the AI Act if they are safety components of medical devices or are medical devices themselves subject to conformity assessment. This means that these devices must comply with both the sector-specific Medical Device Regulation (MDR) or In Vitro Diagnostic Regulation (IVDR) and the horizontal EU AI Act. This simultaneous application encompasses a wide range of pre- and post-market elements.

The interplay between the AI Act and GDPR is significant, as the AI Act explicitly clarifies that GDPR always applies when personal data is processed by AI systems. There are substantial overlaps in obligations, particularly concerning:

Management Systems: Quality management systems under the AI Act are complementary to those under MDR/IVDR and should be integrated.

Data Governance: High-quality datasets for training, validation, and testing of AIaMDs require data governance practices fully compliant with GDPR, including transparency about original data collection purposes.

Technical Documentation and Record-Keeping: Both regulations require extensive documentation and logging of AI system lifecycle events, facilitating traceability and identification of risks like data biases.

Transparency and Human Oversight: Manufacturers must inform users that they are interacting with an AI system (unless obvious) and ensure human oversight mechanisms are in place, aligning with GDPR's Article 22 rights. The AI Act also provides a clearer right to explanation for high-risk systems in Article 86.

Accuracy, Robustness, and Cybersecurity: AIaMDs must be designed to achieve accuracy, robustness, and cybersecurity, aligning with GDPR's technical and organizational security measures.

Despite these synergies, challenges arise from potentially conflicting legal requirements and differing regulatory approaches. GDPR protects personal data from a fundamental rights perspective, while the AI Act is product safety legislation with targeted requirements based on risk. This divergence could lead to situations where an AI system complies with one law but not the other, or even infringes both simultaneously. European governments stress the need for coherent interpretation and enforcement, calling for joint guidelines and cooperation between responsible authorities to minimize administrative burdens and avoid contradictory decisions.

6.2 Regulatory Approval Processes for AI-Powered Medical Devices

The regulatory approval process for AI-powered medical devices (AIaMDs) is becoming increasingly complex, driven by the dual requirements of medical device regulations (MDR/IVDR) and the EU AI Act. As AIaMDs are largely classified as high-risk AI systems, they face heightened scrutiny.

Key requirements for regulatory approval include:

Clinical Evidence and Real-World Testing: High-risk AIaMDs must be supported by robust clinical evidence to demonstrate their safety, performance, and clinical benefit. This often involves clinical investigations or performance studies that constitute real-world testing under the EU AI Act.

Conformity Assessments: Most AIaMDs fall into risk classes (e.g., Class IIa MDR/Class B IVDR or above) that require a notified body to conduct quality management system audits, technical documentation reviews, and inspections to ensure compliance.

GDPR-Compliant Data Governance: High-quality datasets used for training, validation, and testing of AIaMDs must be fully compliant with GDPR. This includes transparency about the original purpose of data collection and adherence to data protection principles throughout the data lifecycle.

Addressing the "Many Hands" Problem: The development and deployment of AIaMDs involve multiple stakeholders, leading to a "many hands" problem where accountability for adverse outcomes can be diffused. Regulatory processes must establish clear accountability mechanisms to protect patient rights and ensure ethical care.

AI is also increasingly integrated into clinical trials themselves, streamlining processes and improving efficiency. For example, AI can assist with patient cohort selection, automate the drafting of patient consent forms (ICFs) by summarizing study protocols, and automate eligibility checks by extracting criteria from various documents. In these applications, ensuring compliance with GDPR (and HIPAA for US trials) is paramount, particularly regarding informed consent and data handling. While AI can significantly enhance clinical trial processes, challenges remain in ensuring the AI is safe, transparent, and effective, especially given the "lack of ubiquitous, uniform standards for medical data and algorithms".

6.3 GDPR Enforcement Actions and Fines: Lessons from Healthcare-Related Cases

Non-compliance with GDPR can result in severe penalties, including fines of up to €20 million or 4% of an organization's annual global turnover, whichever is higher. While not all enforcement actions directly target AI, several cases in the healthcare and life sciences sector highlight the critical areas where organizations fall short, often with implications for AI systems.

Specific healthcare-related enforcement cases and their reasons for fines include:

Unauthorized Data Transfer and Pseudonymization Failure (France): The French Data Protection Authority (CNIL) fined a company €800,000 for transferring customer data for research purposes without proper authorization and without true anonymization. The data, intended for studies and statistics in the health sector, was found to be merely pseudonymous, as re-identification was technically possible. This case underscores the strict interpretation of anonymization under GDPR, which is highly relevant for AI training datasets.

Website Tracking and Insufficient Technical Measures (Sweden): The Swedish DPA imposed a €3.2 million fine on a pharmacy for using "metapixels" on its website that, due to incorrect settings, transmitted customers' personal data to Meta without intent. The investigation found a failure to implement appropriate technical and organizational measures to prevent such incidents. This highlights the need for rigorous technical safeguards, especially when integrating third-party tools that might feed data to AI systems.

Cybersecurity Breaches and Lack of DPIA (Belgium, Poland, Croatia): Several hospitals and companies faced significant fines (e.g., €200,000 in Belgium, €336,000 in Poland, €190,000 in Croatia) due to ransomware attacks, server vulnerabilities, and irrevocable data loss (e.g., radiological images). Investigations often revealed failures to conduct Data Protection Impact Assessments (DPIAs), inadequate information security policies, and insufficient technical and organizational measures, including employee training and IT equipment updates. These cases emphasize that fundamental cybersecurity hygiene and proactive risk assessments are crucial for any organization handling sensitive health data, including those developing or deploying AI.

Email Data Breaches (Italy): The Italian DPA issued fines for data protection violations related to email, such as a medical technology company being fined €300,000 for sending emails where recipients' addresses were visible, potentially revealing health conditions (e.g., diabetes), and a healthcare institution fined €18,000 for inadvertently sending health data to the wrong recipient. These instances demonstrate that even seemingly minor data handling errors can lead to substantial penalties when sensitive health data is involved.

Beyond healthcare-specific cases, broader GDPR enforcement actions against tech giants like Meta, Facebook, TikTok, Amazon, and Google, with fines ranging from millions to over a billion Euros, demonstrate the seriousness with which regulators treat GDPR violations across industries. These cases, though not directly AI-focused, reinforce the importance of explicit consent, data minimization, robust security, and the right to erasure—all principles critical for AI development.

A particularly challenging issue emerging is the conflict between GDPR's strict limitations on processing sensitive personal data and the EU AI Act's encouragement of using such data for detecting and correcting bias in high-risk AI systems. GDPR generally prohibits processing sensitive data unless specific conditions (like explicit consent or public interest) are met, making it difficult for AI developers to access the very data needed to test for fairness. This creates a legal and philosophical dilemma, as complying with one framework might risk violating the other, leaving companies in a precarious position with hefty fines for non-compliance from both regulations. This highlights a key area where regulatory interpretations and potential reforms are needed.

7. Future Outlook and Strategic Recommendations

The profound impact of GDPR on AI in the healthcare industry is undeniable, shaping every facet from data collection to deployment and regulatory oversight. As AI continues its rapid evolution, the interplay between innovation and regulation will become even more intricate. Navigating this complex landscape requires a forward-looking perspective and a commitment to strategic, ethical, and compliant practices.

7.1 Emerging Trends in Healthcare AI Data Protection

The future of data protection in healthcare AI will be characterized by several key trends, driven by both technological advancements and evolving regulatory expectations:

Increased Focus on Algorithmic Fairness and Bias: As AI systems become more prevalent in healthcare decision-making, concerns about algorithmic bias and its potential to exacerbate existing health disparities are growing. Future regulations will likely intensify their focus on ensuring fairness, transparency, and explainability in AI algorithms, requiring developers to demonstrate that their systems do not discriminate against specific patient populations.

Advancements in Explainable AI (XAI): The demand for transparent and interpretable AI will continue to drive research and development in XAI technologies. As XAI improves, it will become easier to understand how AI systems arrive at their conclusions, facilitating regulatory assessment of safety and effectiveness, and building trust among clinicians and patients.

Wider Adoption of Privacy-Preserving Technologies (PPTs): Technologies such as Federated Learning, Differential Privacy, Homomorphic Encryption, and Synthetic Data Generation will become more sophisticated and widely adopted. These PPTs are crucial for enabling AI models to learn from decentralized or encrypted data without compromising individual privacy, thereby easing concerns about data privacy and fostering collaborative AI development.

Real-time Compliance Monitoring and AI-Enhanced IAM: The future will likely see the development of AI-enhanced Identity and Access Management (IAM) systems capable of continuously monitoring adherence to legal frameworks like GDPR and automatically adjusting policies in response to regulatory updates. This will facilitate real-time compliance monitoring and proactive mitigation of issues.

Integration with Blockchain for Enhanced Security: Blockchain technology, with its immutability and transparency, could be integrated into AI-enhanced IAM systems to provide an additional layer of security. This could offer tamper-proof audit trails and reduce reliance on centralized systems, enhancing trust in healthcare data management.

Emphasis on Data Sovereignty and Supply Chain Security: With the proliferation of AI solutions from diverse global sources, there will be an increased focus on ensuring that patient data remains within specific jurisdictions and is protected by robust privacy laws. Healthcare providers will need clear insight into the training data and methodologies used by third-party AI systems and their supply chains.

7.2 Potential Areas for Regulatory Evolution and Harmonization

The regulatory landscape for AI in healthcare is dynamic, with ongoing efforts to refine and harmonize existing frameworks:

Updates to Existing Healthcare Regulations: Existing privacy laws like GDPR (and HIPAA) may be updated to address AI-specific challenges more explicitly, including new requirements for data anonymization, breach notification, and patient consent in AI contexts.

Increased Collaboration and Harmonization: There will be a growing need for increased collaboration between different regulatory bodies (e.g., EU DPAs, FDA, MHRA) to ensure consistent interpretations and enforcement across jurisdictions. The development of joint guidelines and standardized templates is crucial to minimize administrative burdens and avoid conflicting outcomes between GDPR and the AI Act.

Resolution of GDPR-AI Act Conflicts: A critical area for evolution is the tension between GDPR's strict limitations on sensitive data processing and the AI Act's allowance for using such data for bias detection in high-risk AI systems. Regulators will likely need to provide clearer guidance or even legislative reform to reconcile these diverging approaches.

Risk-Based Regulatory Approaches: Regulators will continue to refine risk-based approaches to AI regulation, with more stringent requirements for high-risk systems used in critical care or diagnostics, fostering innovation while prioritizing patient safety.

Development of Ethical AI Frameworks: There will be a continued push to integrate ethical principles (fairness, accountability, transparency, human oversight) into legal mandates and regulatory frameworks, moving beyond aspirational guidelines to enforceable standards.

7.3 Recommendations for Stakeholders: Fostering Ethical and Compliant AI in Healthcare

For healthcare providers, AI developers, and policymakers, navigating the complex intersection of GDPR and AI requires a proactive and multi-faceted strategy:

For Healthcare Providers and Developers:

Embrace Privacy and Security by Design: Integrate privacy and security measures into the core design and architecture of AI systems from the earliest stages of development. This proactive approach ensures compliance is inherent, not an afterthought.

Conduct Thorough Data Protection Impact Assessments (DPIAs): Perform regular and comprehensive DPIAs for all AI projects involving personal data, especially sensitive health data. These assessments are crucial for identifying and mitigating privacy risks, evaluating necessity, and demonstrating accountability to regulatory authorities.

Prioritize Data Quality and Bias Mitigation: Invest in robust data validation, cleansing, and continuous monitoring processes to ensure the accuracy and representativeness of training data. Implement fairness-aware design principles and conduct regular algorithmic audits to identify and mitigate biases that could lead to discriminatory outcomes.

Invest in Explainable AI (XAI): Develop and deploy XAI techniques to provide clear, understandable explanations for AI-driven decisions. This is vital for building trust among patients and clinicians, enabling human oversight, and facilitating regulatory scrutiny, especially for "black box" models.

Implement Robust Data Governance and Access Controls: Establish clear data governance policies that define how AI processes patient information, who has access, and for how long data is retained. Apply strict role-based access controls and maintain detailed audit logs for accountability.

Explore Privacy-Preserving Technologies: Actively research and implement technologies like Federated Learning, Synthetic Data Generation, Homomorphic Encryption, and Differential Privacy. These tools offer powerful solutions for leveraging health data for AI development while minimizing privacy risks and enhancing GDPR compliance.

Ensure Transparent Communication and Patient Empowerment: Provide clear, informed, and revocable consent mechanisms for data collection. Inform patients about how their data is used by AI, the logic involved in automated decisions, and their rights to access, correct, or delete their data. This builds trust and fosters patient autonomy.

Stay Abreast of Regulatory Developments: Continuously monitor and adapt to evolving regulatory frameworks, including the EU AI Act and any updates to GDPR or national laws, particularly concerning AI in healthcare.

Foster Cross-functional Collaboration: Ensure close collaboration between legal, technical, and medical teams throughout the AI development and deployment lifecycle. Early involvement of legal and compliance experts can help interpret regulations and build privacy into the system from the start.

For Regulators and Policymakers:

Provide Clearer, Harmonized Guidance: Develop detailed, cross-sectoral guidelines on the interplay between GDPR and the EU AI Act, particularly concerning sensitive health data, re-identification risks, and bias detection. This will reduce legal uncertainty and administrative burden for organizations.

Promote International Cooperation: Foster greater collaboration among data protection authorities and other regulatory bodies globally to harmonize interpretations and enforcement practices for AI in healthcare, especially for cross-border data flows.

Support Research into Privacy-Enhancing Technologies: Encourage and fund research into and adoption of advanced privacy-preserving technologies that can enable AI innovation while safeguarding patient data. Consider regulatory sandboxes or "AI Airlocks" to test innovative solutions in a controlled environment.

Develop Adaptive Regulatory Frameworks: Recognize the rapid evolution of AI and design regulatory frameworks that are flexible enough to adapt to new technological advancements and emergent risks, rather than relying on static approval models.

By embracing these recommendations, stakeholders can collectively foster an environment where AI's transformative potential in healthcare is realized responsibly, ethically, and in full compliance with the profound demands of GDPR.

FAQ

1. What defines 'sensitive health data' under GDPR, and why does this classification significantly impact healthcare AI?

Under the GDPR, 'personal data' broadly encompasses any information relating to an identified or identifiable natural person. Within this, 'sensitive personal data' or 'special category data' includes 'data concerning health,' which explicitly covers an individual's physical or mental health information, diagnoses, treatments, medical opinions, test results, and even data from fitness trackers.

This classification profoundly impacts healthcare AI because the processing of such sensitive data is, in principle, prohibited unless a specific exemption applies (Article 9). This stringent rule stems from the understanding that health data processing carries "severe and unacceptable risks to fundamental human rights and freedoms," potentially leading to discrimination, stigmatisation, or even physical harm if misused or breached. Consequently, any AI system handling health data inherently faces elevated scrutiny and a significantly higher regulatory burden, demanding robust safeguards and meticulous compliance from the outset.

2. What are the foundational GDPR principles, and how do they directly challenge traditional AI development in healthcare?

The GDPR's foundational principles are designed to ensure lawful, fair, and transparent processing of personal data, protecting data subjects' rights. When applied to AI in healthcare, these principles create significant challenges:

Lawfulness, Fairness, and Transparency: AI systems must have a clear legal basis for processing, often explicit consent for sensitive health data, which must be "freely given, specific, informed and unambiguous." This clashes with AI's need for large, stable datasets, as consent withdrawal can necessitate costly model retraining. Furthermore, the "black box" nature of many advanced AI models conflicts with the transparency mandate to explain "how the decision was made" or provide "meaningful information about the logic involved."

Purpose Limitation and Data Minimisation: Data must be collected for "specific, explicit, and legitimate purposes," and only "the data necessary for the task" should be processed. This directly contradicts AI's "data hunger," as models often achieve optimal performance with vast and diverse datasets. Repurposing existing patient data for new AI research without a new legal basis or explicit consent is particularly challenging, adding substantial legal and administrative overhead.

Accuracy: Personal data used by AI must be "correct, complete, and up to date." In healthcare, this is critical for patient safety, as inaccurate AI outputs can lead to "misdiagnoses or incorrect treatment plans." This principle is also intrinsically linked to algorithmic fairness; biased or unrepresentative training data can perpetuate and amplify discriminatory outcomes.

Integrity and Confidentiality: Requires robust technical and organisational measures to protect data from unauthorised access, alteration, or loss. Given that healthcare AI often aggregates data, breach risks are amplified, demanding comprehensive encryption and strict access controls.

Accountability: Organisations must demonstrate compliance with all GDPR principles. The "many hands" problem in AI development (involving numerous stakeholders) complicates accountability, necessitating meticulously defined data governance frameworks and robust contractual agreements across the entire AI supply chain.

3. How does GDPR's stance on automated decision-making impact AI systems used for patient care?

Article 22 of the GDPR grants individuals the right not to be subject to a decision based solely on automated processing (including profiling) if it produces legal effects or similarly significantly affects them. This is highly relevant for healthcare AI involved in automated diagnoses, treatment recommendations, or patient management, where an AI's decision could have profound life impacts.

While exceptions exist (e.g., explicit consent, public interest), even then, suitable safeguards must be implemented. These safeguards include the right to obtain human intervention, express one's point of view, and challenge the decision. For decisions based on sensitive health data, these requirements are even more rigorous.

Recital 71 reinforces the importance of transparency, implying a "right to explanation" for automated decisions. This legal mandate presents a significant technical hurdle for "black box" AI models, necessitating the development of Explainable AI (XAI) techniques. Fostering patient and clinician trust, essential for AI adoption, hinges on the ability to comprehend and contest AI-driven recommendations, making XAI not just a regulatory measure but a fundamental ethical requirement.

4. What are the key data challenges in developing healthcare AI under GDPR, particularly regarding collection, sharing, and cross-border transfers?

Developing healthcare AI under GDPR faces several critical data challenges:

Data Collection and Training Limitations: AI models need vast data, but GDPR mandates explicit, informed, and revocable consent, which is administratively complex. The risk of re-identification, even from supposedly anonymised data, is a major concern, as achieving true anonymisation for complex health data is "difficult, if not impossible." Unlawfully processed data can also invalidate subsequent AI deployment, necessitating a "Privacy-by-design" approach.

Data Interoperability and Sharing: Healthcare data often remains "locked within different platforms" due to inconsistent formats and proprietary systems, creating data silos. This fragmentation hinders AI training. While interoperability aims to improve data sharing, it must not compromise privacy, requiring secure transmission, consent management, and strict access controls for sensitive health data. GDPR's purpose limitation principle further complicates data sharing for AI research, often requiring rigorous compatibility assessments or new legal bases.

Cross-Border Data Transfers: Transferring personal data outside the European Economic Area (EEA) requires that the destination country ensures an "essentially equivalent" level of data protection. Mechanisms like Standard Contractual Clauses (SCCs) and Binding Corporate Rules (BCRs) facilitate this, but often require a Transfer Impact Assessment (TIA) to evaluate the recipient country's legal landscape. For global cloud-based AI, mapping these flows and implementing robust technical safeguards (like strong encryption and pseudonymisation) are crucial.

5. How do privacy-preserving technologies (PPTs) enable compliant AI innovation in healthcare?

Privacy-preserving technologies (PPTs) are crucial for balancing AI's data demands with GDPR's strict privacy requirements:

Anonymisation vs. Pseudonymisation: Anonymisation permanently removes identifying information, taking data out of GDPR's scope. However, achieving absolute anonymisation for health data is extremely difficult. Pseudonymisation replaces identifiers with codes, keeping data within GDPR's scope but significantly minimising risks, ideal for longitudinal studies.

Federated Learning (FL): Trains AI models across decentralised data sources without centralising raw patient data. Only encrypted model updates are shared, enhancing data privacy, complying with regulations, and enabling collaboration among institutions without compromising individual data security.

Homomorphic Encryption (HE): Allows computations directly on encrypted data without decryption, ensuring data remains encrypted at rest, in transit, and during processing. This enables secure medical research, genomic data analysis, and confidential AI-as-a-Service, significantly enhancing GDPR compliance, though it is computationally intensive.

Differential Privacy (DP): Adds quantifiable noise to datasets or query results, making it difficult to extract sensitive information about individuals, offering strong, provable privacy guarantees for aggregate analysis and AI training.

Synthetic Data: Artificially generated datasets that mimic real-world data's statistical properties without containing identifiable information. This overcomes data scarcity, facilitates secure sharing and collaboration, mitigates re-identification risks, and can even enhance AI quality by being free from real-world biases.

6. What is the role of 'Privacy by Design' and Data Protection Impact Assessments (DPIAs) in achieving GDPR compliance for healthcare AI?